The BBC, publicly funded by UK taxpayers, has announced that it is developing a new algorithm for its Sounds streaming service.

The algorithm is allegedly intended to reduce “echo chambers” and provide more varied content. Speaking at the Radio Festival in London, the BBC’s director of education, James Purnell, said that it will be “built to surprise you”. The BBC wants to “pop your bubble with unexpected and challenging content on its streaming service”, the BBC reports.

Algorithms are widely used to feed users the type of content they are most interested in. They might not have been necessary a few years ago, when the web was just an embryo, but today they have become almost indispensable. It would be overwhelming to navigate the results of a search if they had not been neatly ordered by an AI.

However, AI-powered content feeds have proven capable of echoing information (sometimes even false information) up to a point of ‘critical mass’, beyond which all sorts of outcomes may arise.

These “echo chambers” or “filter bubbles” arise because an algorithm designed to provide “relevant content” will feed users exactly what they want to hear, in order to maximize the time they spend online, and in doing so reinforces their existing opinions and beliefs.

Yet although it is funded by the British taxpayer, the BBC is seen by many as leaning politically to the left, and an eyebrow is always raised when it is the BBC that wants to steer the conversation.

Algorithms are nothing more than software, and it would be unfair to categorize them in human terms such as “good” or “bad”. The sets of rules that power them are chosen by humans, or by corporations made up of humans. The outcome of an algorithm set to provide “quality content” would be different from that of an algorithm set for “relevant content”, “maximize attention”, or even “maximize profit”.
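To make that point concrete, here is a minimal Python sketch of how the same catalogue produces a different top recommendation depending on the goal the algorithm is given. Everything in it is an assumption made for illustration: the three items, their scores, and the names `Item`, `CATALOGUE`, `OBJECTIVES`, `rank` and `top_pick` are hypothetical and say nothing about how the BBC's algorithm, or any real recommender, actually works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    title: str
    relevance: float  # hypothetical: match with what the user already likes
    quality: float    # hypothetical: an editorial quality rating
    attention: float  # hypothetical: predicted time spent on the item


# Invented catalogue with made-up scores, for illustration only.
CATALOGUE = [
    Item("More of what you already agree with", relevance=0.9, quality=0.4, attention=0.7),
    Item("In-depth documentary", relevance=0.5, quality=0.9, attention=0.5),
    Item("Outrage-driven hot take", relevance=0.6, quality=0.2, attention=0.9),
]

# Each objective is just a different scoring rule chosen by a human.
OBJECTIVES = {
    "relevant content": lambda item: item.relevance,
    "quality content": lambda item: item.quality,
    "maximize attention": lambda item: item.attention,
}


def rank(items, objective):
    """Return the items sorted from highest to lowest score for the objective."""
    return sorted(items, key=OBJECTIVES[objective], reverse=True)


def top_pick(items, objective):
    """Return the title of the item ranked first under the given objective."""
    return rank(items, objective)[0].title


if __name__ == "__main__":
    for name in OBJECTIVES:
        print(f"{name}: {top_pick(CATALOGUE, name)}")
```

The sorting code is identical in all three cases; only the scoring rule changes, and with it the item that ends up at the top of the feed.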

There is growing evidence that algorithms are being designed with the priority of grasping and retaining our attention. Content that promotes prejudice is commercially more successful than content that promotes skepticism. When a broadcaster such as the BBC decides to spend large amounts of money and effort on implementing AI in its content feeds, it is almost certainly because it expects big returns.

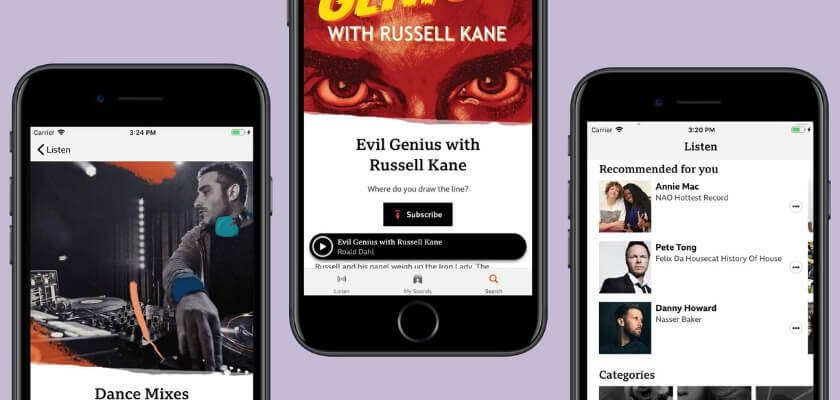

The BBC has already started to forcefully direct traffic to its new Sounds platform by making some of its most successful podcasts – such as Jane Garvey and Fi Glover’s Fortunately – exclusive to the BBC app, withholding them from Spotify, iTunes and Overcast for several weeks.

The new algorithm could simply be the BBC’s opening move in the already well-established war to win people’s attention.