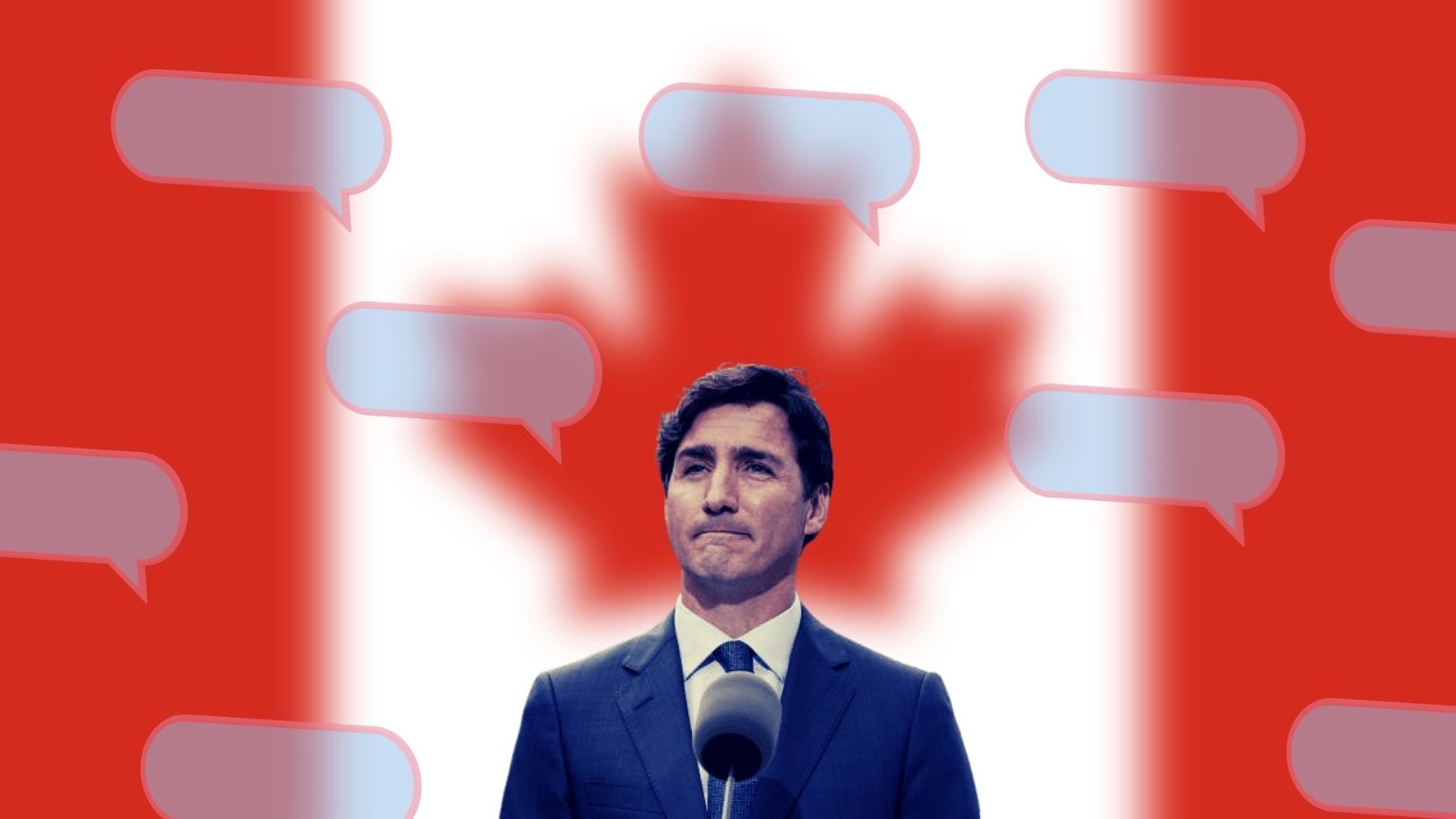

The editorial board of Canada’s Globe and Mail has voiced criticism of the way Prime Minister Justin Trudeau plans to tackle “online harms,” saying that it remains unclear who would be the target of these future laws, or how they would work.

The editors note that ahead of last year’s election, Trudeau and his cabinet promised that, if reelected, they would enact legislation to protect Canadians on the internet. What has become apparent since last fall, they argue, is that this is easier said than done.

The campaign pledge had two parts. The first was to “strengthen” the country’s existing Human Rights Act and the Criminal Code so that authorities could use them to combat online hate, by treating alleged hate speech as a form of discrimination.

A bill to that effect had already been introduced in Parliament but failed, and this “promise” was understood to mean bringing it back. That has yet to materialize.

The second part was to introduce new legislation within the new cabinet’s first 100 days, aimed at combating “serious forms of harmful online content.” That, too, has yet to happen.

The reason, writes the Globe and Mail, is that a technical paper Trudeau’s Liberals published last summer to outline this legislation drew significant pushback and put privacy advocates up in arms.

Those behind the future law intended to treat obvious criminal acts, such as incitement to terrorism or child pornography, the same way as “hate speech,” which is subject to interpretation and opinion; criminalizing it would therefore pose a threat to freedom of speech.

Other contentious details in the technical paper revealed that social media companies would be forced to block content identified as “harmful” and, in some cases, report it to the police.

The pushback led the Canadian government to step back and focus on a “systems-based approach instead of a content-based model,” taking its cues from the EU and the UK.

“It’s a smarter approach, because it’s a more limited approach,” writes the editorial board, adding that it is “best to leave it [regulating allowed speech] to companies, but have governments impose a duty of care on them.”