Digital scams have become increasingly sophisticated, and Google’s latest innovation offers a promising defense mechanism. But, as with everything Google promotes as progress, the technology’s impact on people’s lives could become a major civil liberties issue, especially since anti-privacy EU officials are likely salivating at the idea of it.

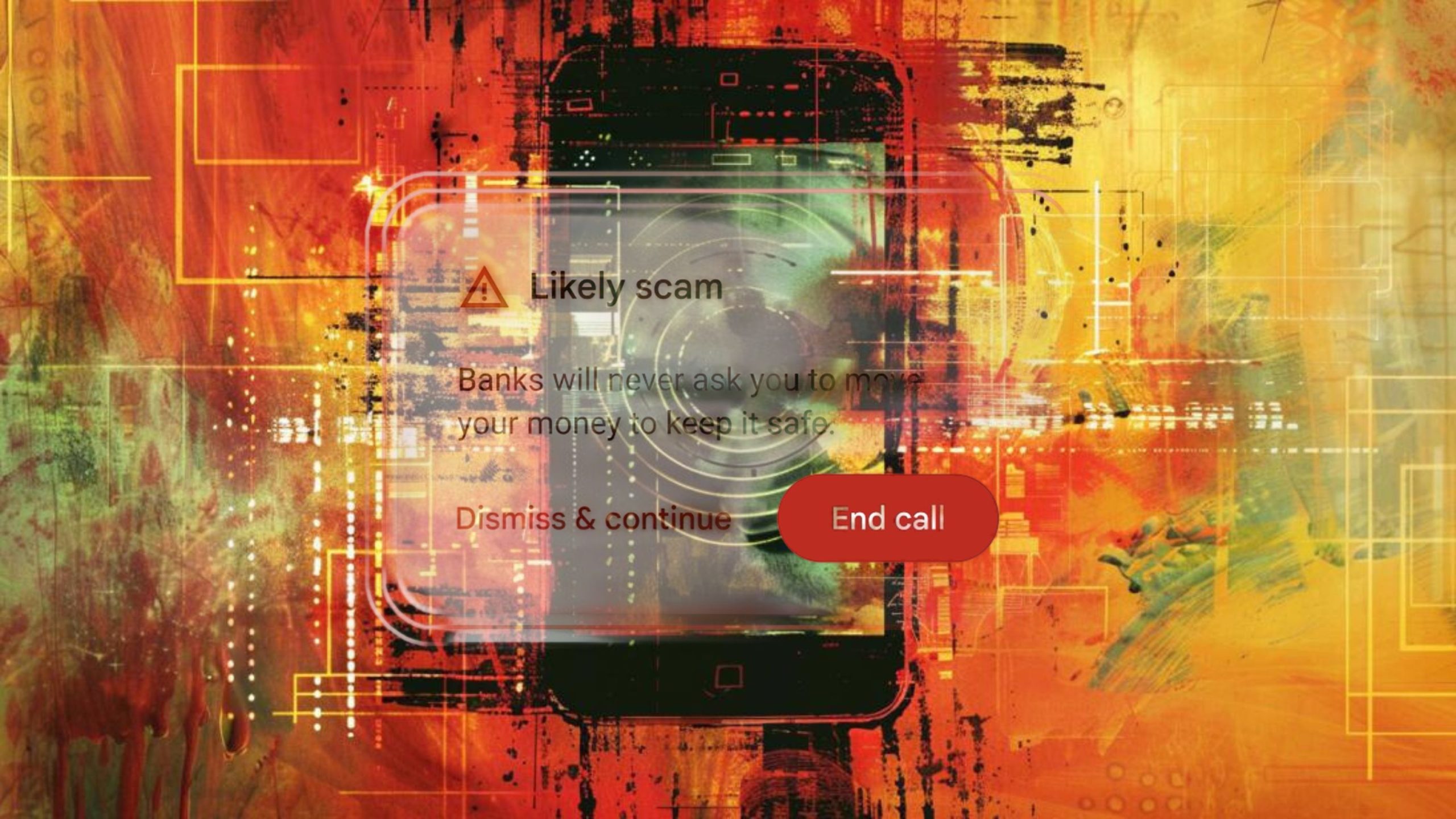

At its I/O developer conference, Google announced that it is testing a new call monitoring feature designed to protect Android users from phone scams. This feature leverages Gemini Nano, a streamlined version of Google’s Gemini large language model, which can run locally on devices to detect fraudulent language and patterns during calls, alerting users in real time. While this development is a significant step forward in combating scams, it also raises crucial questions about privacy and the potential for broader applications that could infringe on personal freedoms.

Gemini Nano: A Powerful Tool Against Scams

Google’s new feature utilizes advanced AI to scan for signs of scamming behavior, such as requests for personal information, urgent money transfers, and payments via gift cards. By operating entirely on-device, Gemini Nano is supposed to keep conversations private, since, in theory, they do not need to be sent to external servers for processing.
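
To make the idea of scanning for "signs of scamming behavior" concrete, here is a deliberately simple sketch. It is not how Gemini Nano works: Google uses a language model, while this example swaps in plain keyword patterns, and every pattern and function name in it is a hypothetical chosen for illustration.

```python
import re
from typing import List

# Hypothetical patterns for the three warning signs mentioned above.
# These are illustrative assumptions, not Google's rules or model.
SCAM_PATTERNS = {
    "personal_information": re.compile(
        r"\b(social security number|password|pin|bank account number)\b", re.I
    ),
    "urgent_transfer": re.compile(
        r"\b(wire|transfer|send)\b.*\b(immediately|right now|urgently)\b", re.I
    ),
    "gift_card_payment": re.compile(r"\bgift cards?\b", re.I),
}


def flag_scam_signals(transcript: str) -> List[str]:
    """Return the names of the warning signs found in a call transcript."""
    return [
        name
        for name, pattern in SCAM_PATTERNS.items()
        if pattern.search(transcript)
    ]


if __name__ == "__main__":
    sample = "You must transfer the money immediately and pay the fee with gift cards."
    print(flag_scam_signals(sample))
```

Even this toy version shows the trade-off: a harmless sentence such as "I bought a gift card for mom’s birthday" would trip the gift card pattern, and the same scanning loop works just as well with any other list of words someone decides to look for.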

The EU’s Perspective: From Child Safety to Potential Overreach

The European Union has been at the forefront of legislative efforts to regulate the online world, particularly in the name of protecting children from sexual abuse material, or at least in using that goal as an excuse to erode privacy. The EU’s “Chat Control” proposal, for instance, has sparked significant debate due to its implications for privacy and encryption. Initially, the proposal mandated that tech companies implement client-side scanning (CSS), meaning on-device scanning, to detect prohibited content before it is encrypted and sent. Many critics argue that this could lead to mass surveillance, weaken end-to-end encryption, and ultimately be used to monitor and detect whistleblowers, dissidents, or anyone else a government would want to surveil.

While Google’s new scam detection feature is focused on phone calls, it sets a precedent for the use of advanced AI in real-time content monitoring. Some tech companies have pushed back against such invasive anti-privacy proposals. But now that Google has decided to implement real-time call scanning to detect scams, the floodgates could open to other forms of on-device scanning.

Now that the technology exists and is being used anyway, this development could embolden the EU to extend similar requirements to other forms of communication, such as emails, chats, and social media interactions, under the guise of preventing various types of harmful content, including “misinformation,” “hate speech,” and other activities. Today it’s scams, tomorrow it’s dissent.

It hints at a future in which it’s not just the cloud that people need to be concerned about when it comes to surveillance and the monitoring of speech; they’ll also have to contend with whether they ultimately have privacy on their devices themselves.

Privacy Concerns and the Slippery Slope

The potential for such technology to be expanded beyond its initial purpose raises significant privacy concerns. If the EU were to mandate the use of client-side scanning for a broader range of content, it could lead to a scenario where all forms of digital communication are subject to constant monitoring. This could effectively dismantle the privacy protections afforded by end-to-end encryption, as all messages would need to be scanned for illegal content before being encrypted.

Critics argue that this approach could create a surveillance state, where personal communications are continuously scrutinized by AI algorithms, as mandated by governments. The risk of false positives, where legitimate content is incorrectly flagged as harmful, is another serious concern. Such errors could ultimately lead to unwarranted scrutiny of innocent individuals and a chilling effect on free speech, in a world where even your own device isn’t safe from monitoring for dissent.
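
The false positive concern is easier to see with some arithmetic. The sketch below uses entirely hypothetical numbers (they are assumptions for illustration, not measurements of any real scanner) to show how a detector that sounds accurate can still produce mostly wrong flags when harmful content is rare.

```python
def flagged_breakdown(harmful_messages, benign_messages,
                      true_positive_rate, false_positive_rate):
    """Split a scanner's flags into correct and incorrect ones.

    Returns (true_flags, false_flags, share_of_flags_that_are_correct).
    """
    true_flags = harmful_messages * true_positive_rate
    false_flags = benign_messages * false_positive_rate
    share_correct = true_flags / (true_flags + false_flags)
    return true_flags, false_flags, share_correct


if __name__ == "__main__":
    # Hypothetical scenario: 1,000,000 messages, 1,000 of them harmful,
    # a scanner that catches 99% of harmful messages and wrongly flags
    # 1% of innocent ones.
    true_flags, false_flags, share_correct = flagged_breakdown(
        harmful_messages=1_000,
        benign_messages=999_000,
        true_positive_rate=0.99,
        false_positive_rate=0.01,
    )
    print(f"correct flags: {true_flags:.0f}")
    print(f"false flags:   {false_flags:.0f}")
    print(f"share of flags that are correct: {share_correct:.1%}")
```

Under these assumed rates, roughly nine out of every ten flagged messages would belong to innocent people, which is the kind of outcome critics have in mind when they warn about unwarranted scrutiny.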