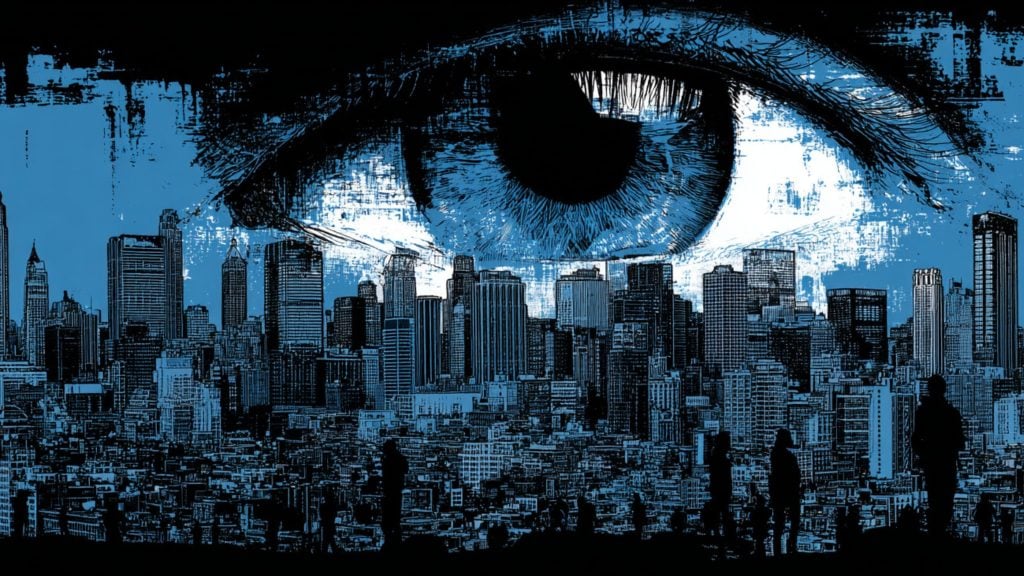

London, and the UK in general, are “renowned” for the sheer scale of indiscriminate mass surveillance carried out in the streets using surveillance cameras.

And despite consistent criticism of the practice, the authorities there seem determined to keep tripling down.

Not all surveillance is created equal, either; the kind the Metropolitan Police are now testing is known as Live Facial Recognition (LFR), and a trial carried out this week at the busy Oxford Circus intersection has ruffled some feathers in the privacy and civil rights advocacy community.

The idea behind facial recognition systems is to match a human face from an image or a video frame against a database. LFR is a real-time deployment of the tech that compares live camera feed(s) of faces against an existing watchlist.
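To make the matching step concrete, here is a minimal sketch of comparing one detected face against a watchlist. It assumes each face has already been turned into a numeric vector (an "embedding") by some separate model. The function names, the toy vectors, and the 0.8 threshold are illustrative assumptions, not details of the Metropolitan Police system.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two non-zero vectors, from -1.0 to 1.0."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def match_against_watchlist(face_embedding, watchlist, threshold=0.8):
    """Return (person_id, score) for the closest watchlist entry.

    person_id is None when the best score is below the threshold.
    The threshold value is a hypothetical choice for illustration only.
    """
    best_id, best_score = None, -1.0
    for person_id, reference in watchlist.items():
        score = cosine_similarity(face_embedding, reference)
        if score > best_score:
            best_id, best_score = person_id, score
    if best_score >= threshold:
        return best_id, best_score
    return None, best_score


# Toy watchlist with made-up 3-dimensional embeddings.
watchlist = {
    "person_a": [1.0, 0.0, 0.0],
    "person_b": [0.0, 1.0, 0.0],
}

hit_id, hit_score = match_against_watchlist([0.9, 0.1, 0.0], watchlist)
miss_id, miss_score = match_against_watchlist([1.0, 1.0, 1.0], watchlist)
print(hit_id, miss_id)
```

In a sketch like this, everything hinges on the threshold: set it lower and more passers-by are flagged as matches, set it higher and more genuine matches are missed. That trade-off is one reason critics focus on false identifications.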

The problem with using LFR in the UK, as many legal experts have long warned, is that although the technology is extremely privacy-invasive and not technically good enough to avoid false identifications that can prove very costly for the affected individual, the existing laws to regulate and oversee the use of biometrics in this scenario are inadequate, to say the least.

That should be a major, if not insurmountable, hurdle, particularly in a democracy, but as real life and real practices show, it has not been. Instead, critics are now comparing the state of affairs in the UK in this context to what has been happening in countries like China.

And the police are not alone in using LFR – the technology is also deployed in schools and privately-owned retail chains.

“The current use of live facial recognition is not lawful, and until the legal framework is updated, it will not be,” Carly Kind, director of the Ada Lovelace Institute, was quoted as saying by the Financial Times in late June, adding, “You don’t want to become a society where you are using biometrics to surveil members of the public who can’t opt out of it.”

Cut to mid-July, and the Metropolitan Police think it is simply a matter of testing what experts are saying is not even lawful: Scotland Yard ran an ad on the StarNow casting site that invited “actors” to show up and participate in “scientific research” whose results would help law enforcement understand how to use the technology “legally and fairly.”

However, there are no proper legal rules that currently define this activity, so the police referred broadly to the public sector equality duty, which is aimed at eliminating discrimination and advancing equality of opportunity as public bodies carry out their activities.

The ad said that participants would be walking through an LFR deployment while taking photos of faces, selfies, and videos (including while wearing face masks) in order to create a database for analysis.

A Scotland Yard spokesperson subsequently spoke about “ongoing legal responsibilities” to discover how the system performs, particularly regarding its accuracy, defining it as a way to prevent and detect crime, find wanted criminals, and also very loosely as technology that “protects people from harm.”

It was also said that participants in the “exercise” on Thursday consisted of police staff and people from an actor agency.

But Liberty, one of the rights groups that turned up at the site to protest and inform citizens about what was going on, was not convinced.

“If they’re using actors, why do they also need to use it on Oxford Circus with thousands of people also passing through,” they wondered.

Liberty also noted that another deployment that happened last week scanned 15,800 people. “It’s reasonable to assume a similar number of normal people will be scanned this time too without realizing, as well as the paid actors,” the group said.

Big Brother Watch activists were also there, and the organization reported on the goings-on in a series of tweets, calling the LFR exercise Orwellian and saying the system proved inaccurate, misidentifying people in a number of instances, just as those campaigning against it had feared.

“Just two years ago in our landmark legal case, the courts agreed that this technology violates our rights and threatens our liberties. This expansion of mass surveillance tools has no place on the streets of a rights-respecting democracy,” said Liberty lawyer Megan Goulding.

Goulding argued that oppressive-by-design technology can never be regulated to remove all inherent dangers that come with it, adding that “the safest, and only” way forward is to ban facial recognition.

The Ada Lovelace Institute’s recent two-year review of the technology concluded that the UK should ban it immediately, until the country has laws in place that regulate the use of LFR.

The laws that currently cover the areas of human rights, privacy and equality are inadequate, the report by former Deputy London Mayor and barrister Matthew Ryder concluded, at the same time urging a moratorium until legal conditions have been created for the technology’s safe use.