Clearview AI has been in the news over the last couple of days after reports brought to light its scraping of people’s public images for use in its mass surveillance facial recognition software. Law enforcement agencies around the world, including the FBI and the Department of Homeland Security, are happily using that software.

Rogue police officers in the NYPD have apparently been using Clearview AI’s facial recognition software on their personal phones, even though the department officially passed on the software last year and refused to use it.

Apparently “dozens” of cops are using it unofficially. “They’re playing with fire. It’s going to catch up with them,” an insider told the New York Post. So even if Clearview AI goes out of business tomorrow, the database is still out there, being used unofficially to send people to jail, which raises the risk of false identification and the conviction of innocent people.

Yesterday we covered the cease and desist letter that Twitter sent to Clearview AI, accusing the company of violating Twitter’s terms of service by taking images from users’ profiles and using them in this fashion. Twitter demanded that Clearview AI immediately delete all of the data it obtained from Twitter.

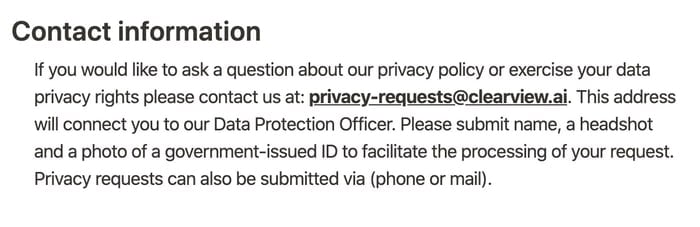

As it turns out, Clearview AI’s privacy policy provides an option for users to directly contact them and have their photos removed. The problem is, you have to “submit name, a headshot and a photo of a government-issued ID to facilitate the processing of your request.”

Obviously, we’re not recommending that anyone do this. Rather, we’re pointing out how preposterous it is that they’ll only delete the data they have on you if you send them more data, including your government-issued ID.

This story hasn’t sparked nearly as much controversy as it should, perhaps for obvious reasons. There is an eerie silence about such a critical and potentially consequential issue.
