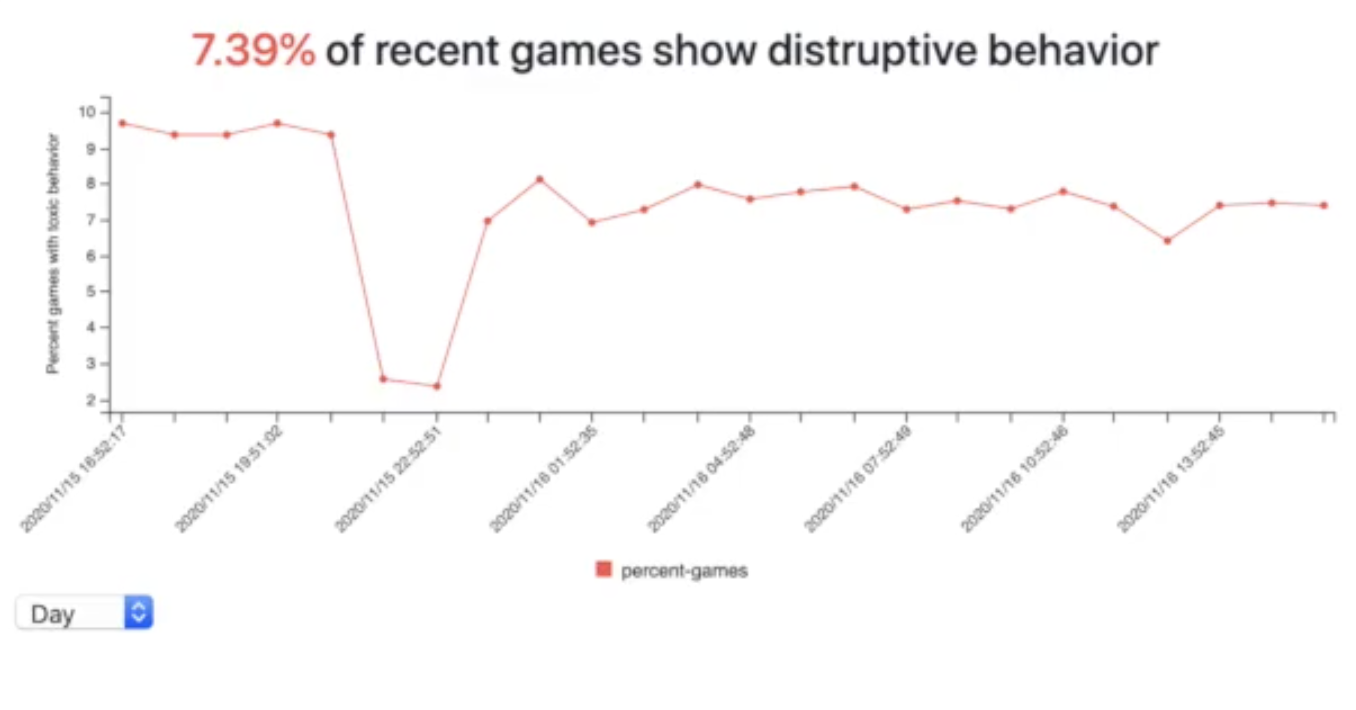

A technology company, Modulate, is introducing AI moderators for online games that can moderate in-game voice chat in real time. The aim is to curb what it calls rampant player “toxicity” in online gaming.

Modulate’s AI moderator is called ToxMod and is touted as the first voice-native moderation service. While most moderation tools are text-based, the one developed by Modulate works on voice. This means ToxMod can censor speech based on how something is said, not just on what is said.

Mike Pappas, the co-founder and CEO of Modulate, said that developers are now better positioned to take a nuanced approach to dealing with “toxic” or “problematic” speech. Unlike the former approach, where conversations were shut down altogether, the new approach with moderators such as ToxMod is to block certain words.

Much like Overwatch, ToxMod is trying to learn more about what players say and why they say it, using machine learning and AI. Characteristics of speech such as volume, inflection, and emotion are analyzed to identify toxic elements.

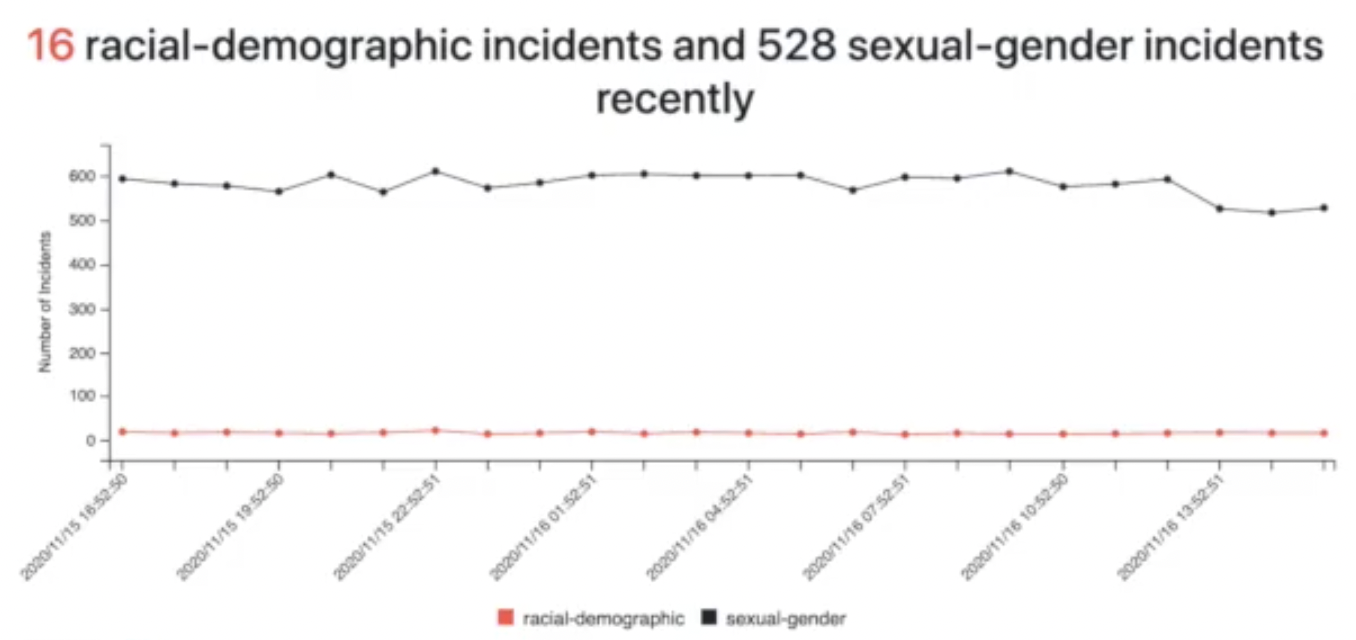

Demos of ToxMod show that the system can filter out “disruptive behavior” as well as “sexual-gender incidents.”

It is worth noting that many gamers consider moderators that learn the underlying patterns of speech to be invasive.

Nonetheless, ToxMod is not the only attempt to understand and moderate speech in online gaming. Sony’s popular PS5 also allows users to record party chats and report them to Sony. While Sony leaves the reporting of “toxic” speech to users’ discretion, ToxMod takes a more proactive approach and censors in real time.


If you're tired of censorship and dystopian threats against civil liberties, subscribe to Reclaim The Net.