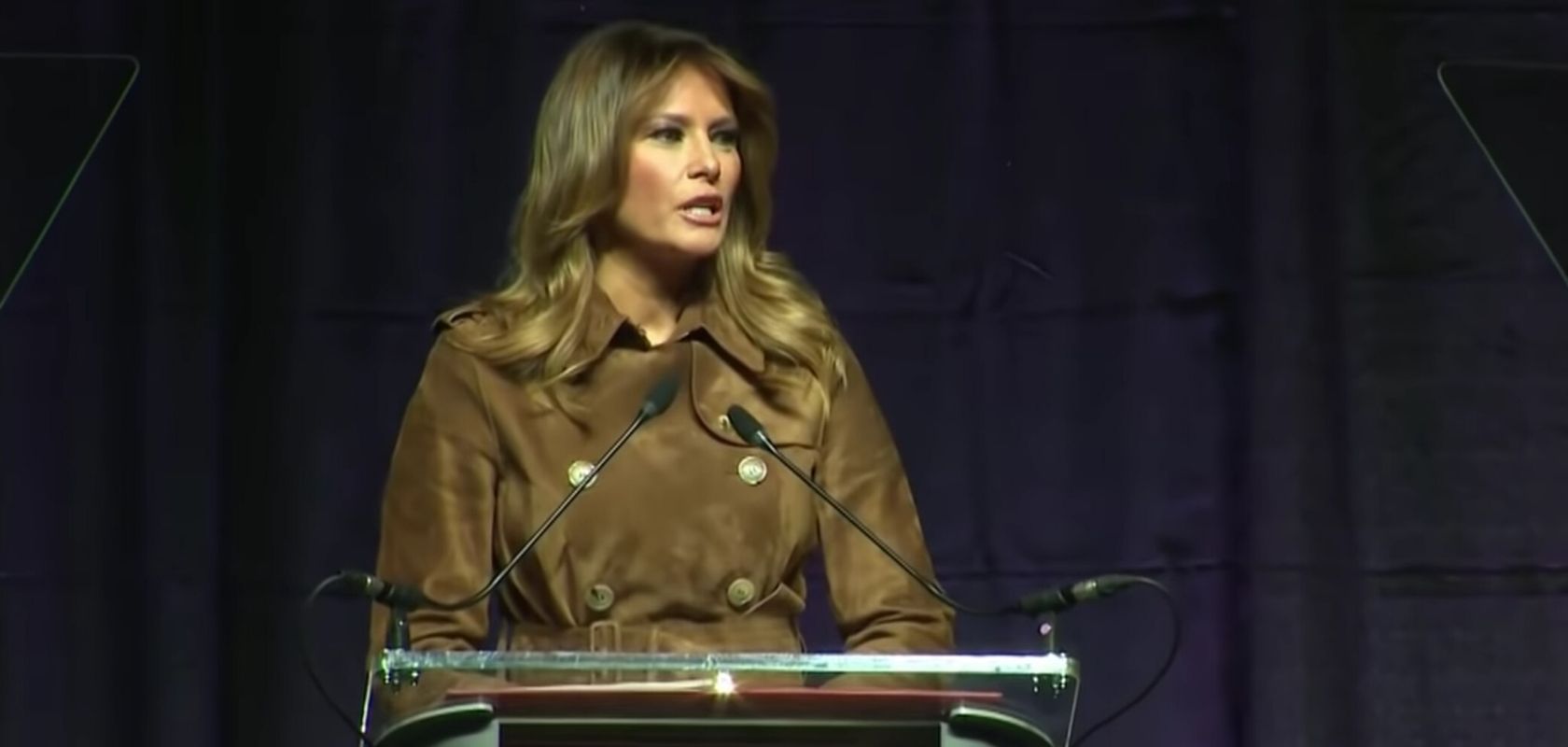

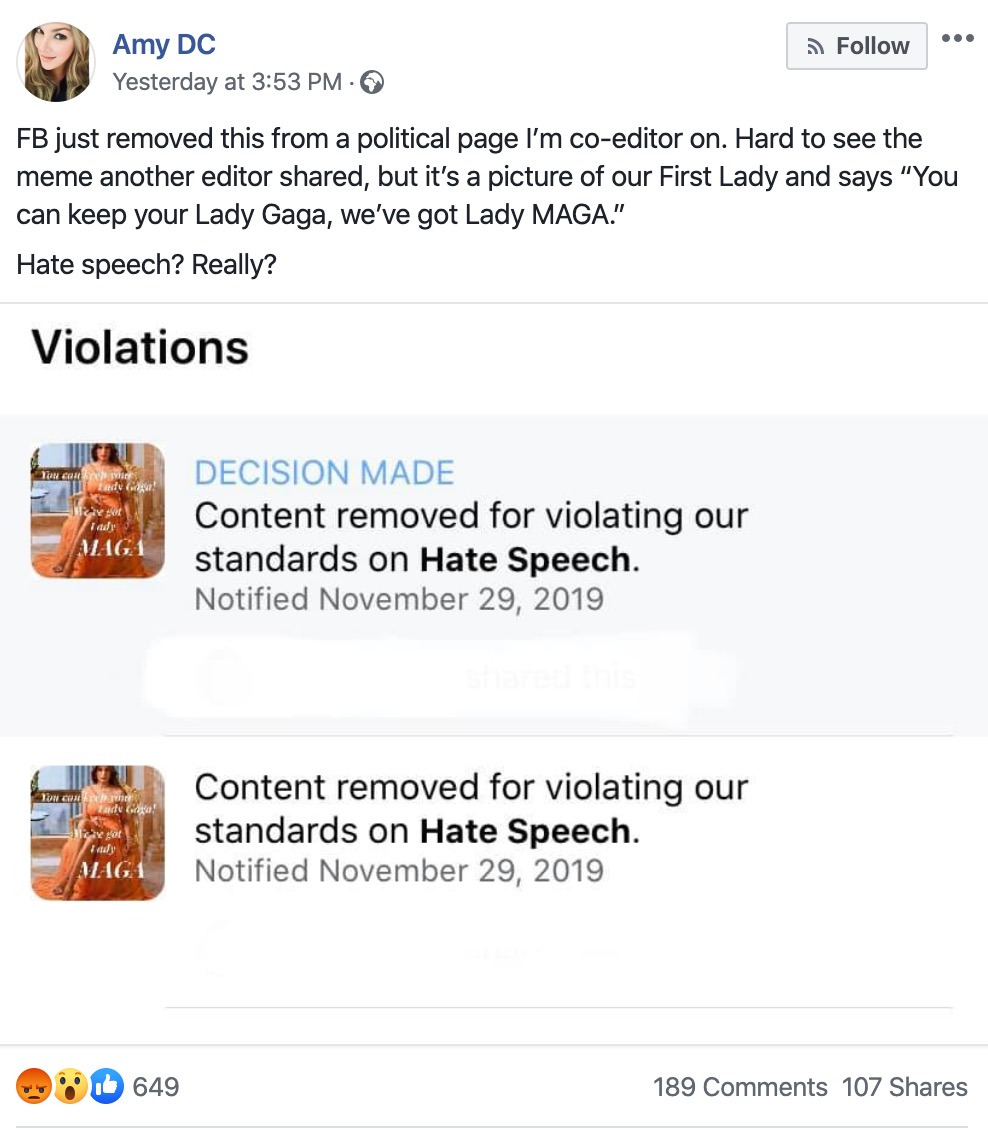

A Facebook user known as Amy DC has expressed concern after Facebook deleted a meme on the grounds that it was “hate speech”. The meme was a photo of First Lady Melania Trump featuring the text “You can keep your Lady Gaga, we’ve got Lady MAGA”.

Amy, who runs a political fan page on the platform with others, was confused to discover yesterday, November 29, that the social media giant had removed the post. The removal indicated that Facebook had decided the photo of Mrs. Trump with the aforementioned text constituted “hate speech”.

According to Amy, the social graphic was posted by another editor of the page.

Amy said on Facebook:

“FB just removed this from a political page I’m co-editor on. Hard to see the meme another editor shared, but it’s a picture of our First Lady and says ‘You can keep your Lady Gaga, we’ve got Lady MAGA.’

Hate speech? Really?”

As part of its effort to tackle “hate speech” on the platform, Facebook recently boasted that it removed 11.4 million pieces of “hate speech” between April and September 2019. But the company doesn’t provide a database of the posts it removes on these grounds, making it impossible to know what types of posts Facebook is censoring.

Social media platforms are increasingly taking it upon themselves to police “hate speech” on the internet, a trend that digital rights groups such as the EFF have urged caution about:

“There seems to be near universal agreement that intermediaries that choose to take down “unlawful” or “illegitimate” content will inevitably make mistakes. We know that both human content moderators and machine learning algorithms are prone to error, and that even low error rates can affect large swaths of users.”

While it is easy to see how algorithms policing the internet can produce situations like this one, it should be noted that, according to the information Amy provided, the hate speech removal of the Trump post carried a “DECISION MADE” tag. That tag usually appears when a moderation decision has been confirmed by manual review.

If you're tired of censorship and dystopian threats against civil liberties, subscribe to Reclaim The Net.