Critics bidding to exert control over super-influential platforms like Facebook consistently bring up several talking points, either as actual policy proposals or as a form of warning and pressure: breaking up the giants is a popular one, but lately, getting rid of the safe harbor status guaranteed by Section 230 is increasingly being mentioned as well.

And Democratic presidential hopeful Joe Biden has changed his mind on Section 230: once a supporter of the law that contains it, the Communications Decency Act (CDA), he now wants the section repealed.

This is all to target Facebook, of course – with which Biden is currently engaged in a “war” over its refusal to remove a Trump campaign ad that he considers to contain false information.

In a short CNN video Biden is heard saying that social media platforms need to be “more socially conscious” and also demonstrate “journalistic responsibility.”

“I, for one, think we should be considering taking away (Facebook’s) exemption that they cannot be sued for knowingly engaged on, in promoting something that’s not true,” Biden is heard saying.

The “exemption” that Biden seems to be referring to is the set of provisions in Section 230 that allow the internet as we know it to function the way it does today: by granting social media the status of platforms rather than publishers, meaning that they are not legally liable for content posted by their users.

Proponents of Section 230 say that taking away this privilege would actually result in mass censorship and content removal, rather than in these companies deciding to stand up for their users in court.

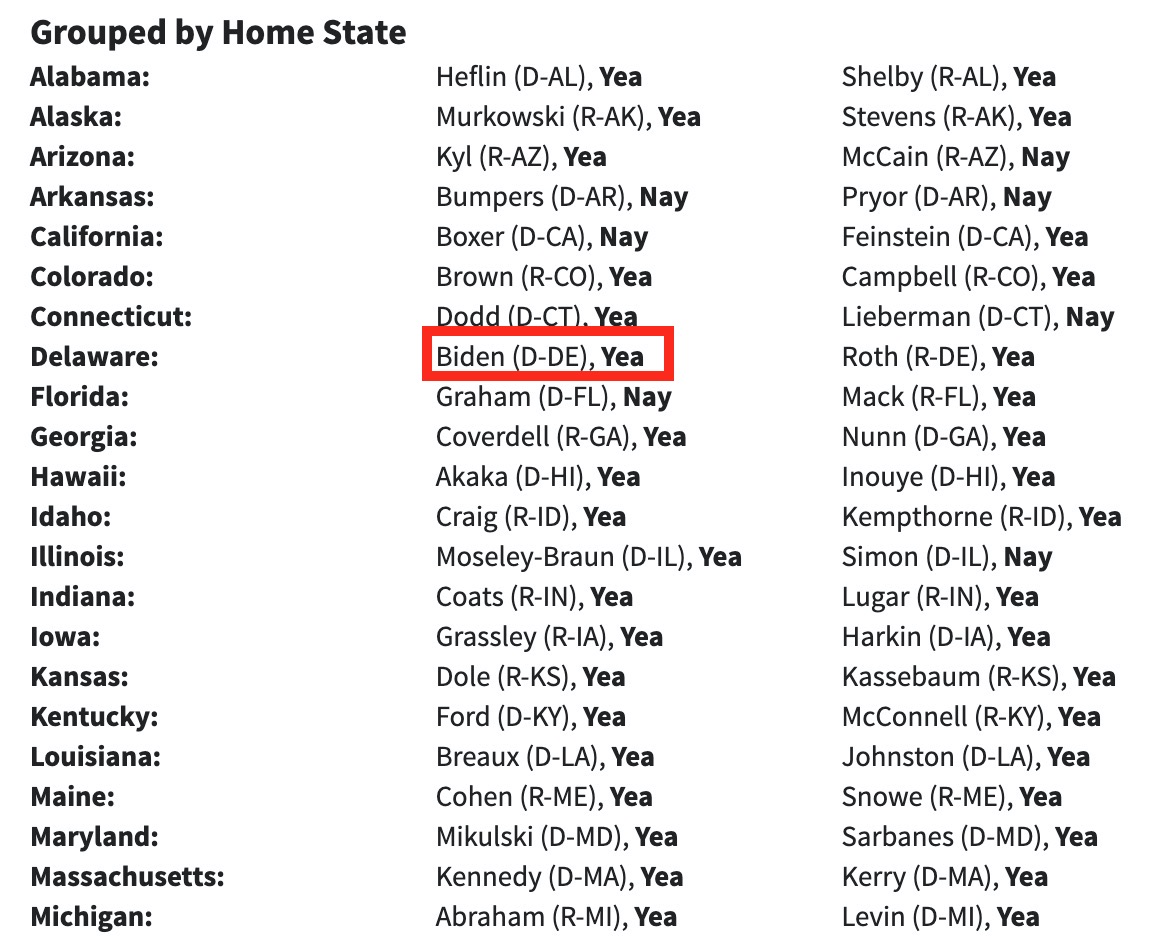

But in making his comments, Biden also betrayed a deep lack of understanding of the legislation that he once supported with his vote as a senator.

Although the former US Vice President thinks that Section 230 stands in the way of platforms moderating and removing content, the opposite is true. It’s no wonder people are confused about it, though. Section 230 was recently blamed by the likes of the New York Times for enabling “hate speech online” – a claim that is not true. The Times then retracted the claim with a small correction at the end of the article, one that undermined the article’s whole argument.

And while the law states that “no provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider” – it balances this by also allowing these services to “restrict access to or availability” of content they see as objectionable, and even to provide the technical means to restrict access to it.

The real key to Section 230 is in this passage:

No provider or user of an interactive computer service shall be held liable on account of—

(A) any action voluntarily taken in good faith to restrict access to or availability of material that the provider or user considers to be obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable, whether or not such material is constitutionally protected; or

(B) any action taken to enable or make available to information content providers or others the technical means to restrict access to material described in paragraph (1)

If platforms were held responsible for what their users say, the amount of censorship and screening this would entail would be like nothing we’ve seen before, and it could create an impossible situation for smaller, up-and-coming platforms that don’t have the resources to even attempt it. It would also restrict the amount of user-generated content that platforms would be willing to allow.
