Facebook is accused of building a two-tier system of rules and standards around allowed content and speech: one for ordinary people, and another for the elites.

At the same time, the company is under fire for misleading the public and its Oversight Board about the program that makes this possible.

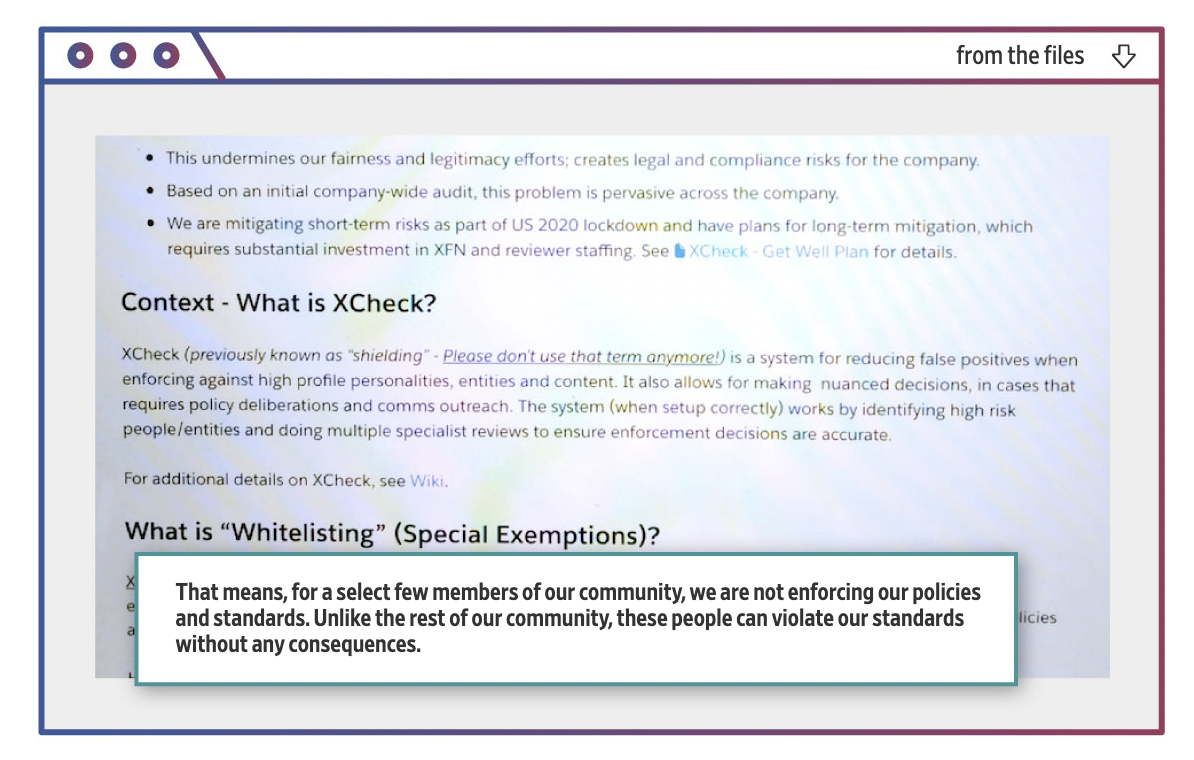

That’s according to a report in the Wall Street Journal, which said it had seen documents detailing how the scheme, dubbed XCheck (cross check), works.

The idea behind it was to protect high-profile politicians, celebrities and journalists on the network, which is now said to have reached 3 billion users globally. But this very small group of privileged users has over time become protected from Facebook itself and some of its own rules, the report said.

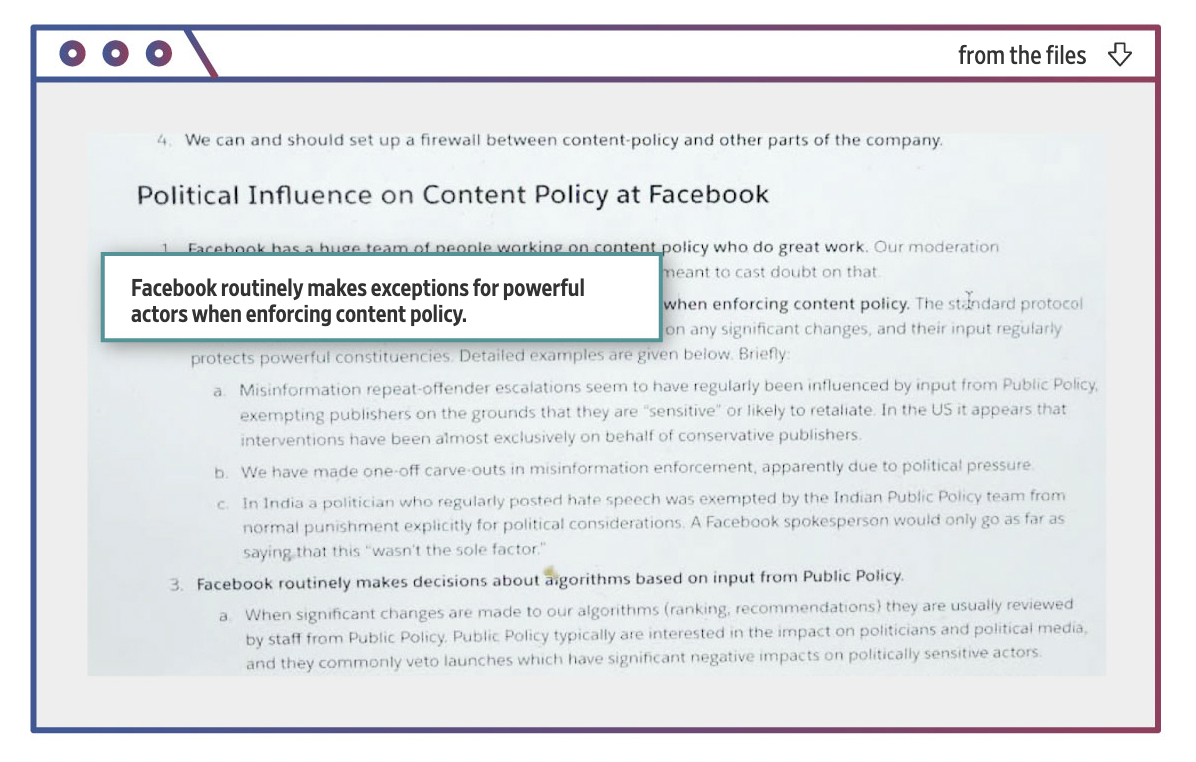

Using a variety of tools, including whitelisting, which means complete exclusion from review, and delayed review of content by human moderators, XCheck reportedly openly favors VIP users. Unlike everyone else on the social media site, they are allowed behavior that violates the giant’s standards “without any consequences.” That’s according to a confidential internal document looking into the program.

This is in marked contrast to how billions of “deplorables” are treated on the platform, often falling victim to Facebook’s automated moderation, which is inadequate to say the least, as well as to deliberate censorship.

In seeking to illustrate how VIP users are abusing this privilege, the newspaper for some reason chose only examples harmful to one side of the political divide in the US. It cited posts containing anti-Clinton and anti-Covid vaccination content, among others, and even one from former President Trump, all of which are viewed as harmful and would, in any case, have been censored had they been posted by “regular” people.

Facebook says it accepts criticism of XCheck, though it defends the scheme, says it has not been widely used, and denies that the Oversight Board was kept in the dark.

Spokesperson Andy Stone also said the documents the report draws from are outdated. That would include the review that concluded Facebook was “not actually doing what we say we do publicly,” and that the program was in fact widely used.

“A lot of this internal material is outdated information stitched together to create a narrative that glosses over the most important point: Facebook itself identified the issues with cross check and has been working to address them,” said Stone.
