The Electronic Frontier Foundation (EFF), a prominent US-based digital rights group, is once again stepping out of the comfort zone of most observers and media outlets in the US, to ask hard questions about the way content is treated and censored online by some of the world’s most powerful platforms.

For example: what process lies behind the internet giants’ “moderation” efforts, and how exactly do they justify their decisions to remove and ban content and/or creators? And, above all, how might this murky process be harming legitimate speech?

These might seem like the most basic and logical questions anyone would ask each time Facebook, YouTube, or Twitter talk about the way they police content – yet, these questions are surprisingly rare.

The EFF took note of a recent inquiry organized by the US Senate, during which tech giants were eager to paint a picture of their improved efforts to block extremist and terrorist content, apparently thanks to using automated, artificial intelligence-powered tools.

However, this message seems to require a lot of blind faith while offering very little transparency into the process: the giants announced strides in dealing with extremist content, but failed to provide any meaningful insight into how they define and flag that content, and what they do to make sure that legitimate free speech is not harmed in this automated process.

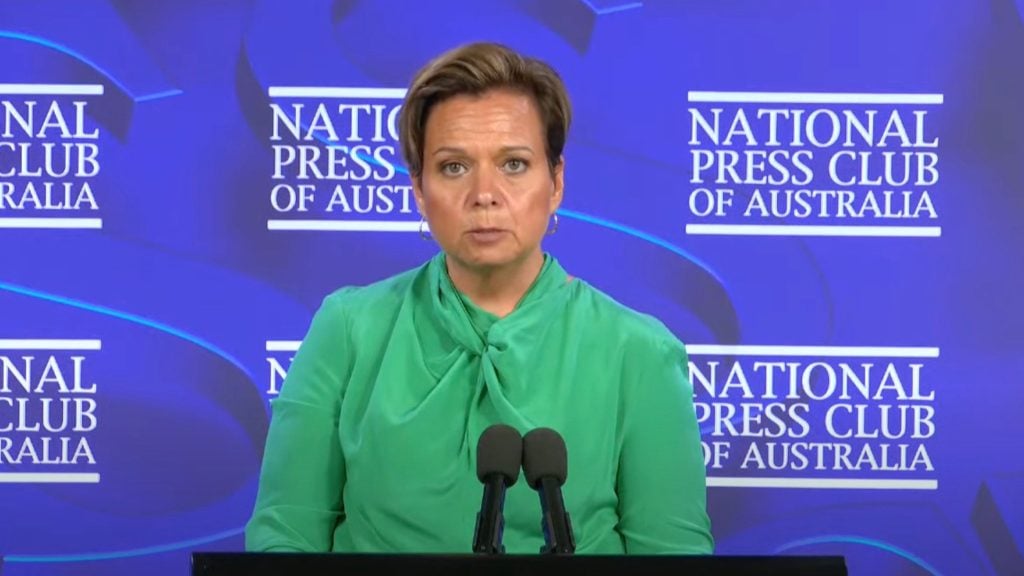

“Facebook Head of Global Policy Management Monika Bickert claimed that more than 99% of terrorist content posted on Facebook is deleted by the platform’s automated tools, but the company has consistently failed to say how it determines what constitutes a terrorist – or what types of speech constitute terrorist speech,” EFF noted.

The EFF post also serves to remind internet users that there’s a world beyond US politics of the hour – and that there, too, unclear and possibly unfair “moderation” conducted by these extremely powerful online platforms can cause harm, or at the very least, introduce confusion and uncertainty.

For instance, the EFF said, Kurdish activists have accused Facebook of taking the side of Turkish authorities in their decades-long conflict, while moderation policies apparently designed to remove extremist content are applied in a blanket manner that often also removes potential evidence of war crimes committed in crisis hotspots like Syria.

The EFF’s proposed remedy is for the tech giants to actually implement the Santa Clara Principles that they have formally endorsed.

The Principles say that platforms should:

- provide transparent data about how many posts and accounts they remove;

- notify users whose content has been removed about what was removed and under which rules; and

- give those users a meaningful opportunity to appeal the decision.
