YouTube’s controversial “hate speech” rules have caused mass collateral damage to the creator community.

History teachers, model makers, independent journalists, and other creators have found that their content is often demonetized or removed by YouTube’s hate speech algorithms because the algorithms don’t understand when words are being used in an educational or newsworthy context.

This has created an environment where creators are increasingly self-censoring when discussing news and current events because they fear that mentioning certain words, regardless of context, will trigger YouTube’s hate speech algorithms.

But despite the problems hate speech algorithms have caused for creators, a new report has revealed that federal funds are now being used to research online hate speech with the goal of providing “opportunities for training and evaluating hate speech classifiers.”

The research was conducted by the University of Southern California (USC) and was sponsored by the National Science Foundation (NSF) – a federal body that has an annual budget of over $8 billion and funds scientific research, including “social sciences.”

The project focused on free-speech social network Gab and is titled: “The Gab Hate Corpus: A collection of 27k posts annotated for hate speech.”

It claims that “the growing prominence of online hate speech is a threat to a safe and just society” and notes that Facebook, Twitter, and Google’s hate speech policies, “that sometimes go beyond legal restrictions,” have resulted in researchers “devoting significant resources to developing detection algorithms for hate speech, abusive language, and offensive language.”

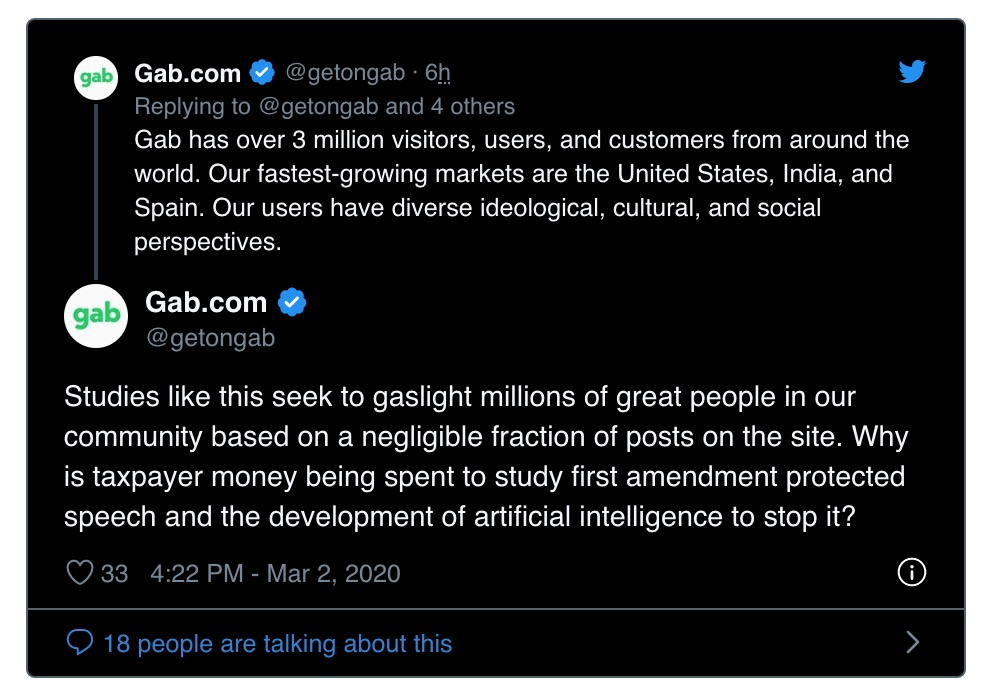

Gab has blasted the research project and said: “These researchers used federal funds to highlight 27,000 posts out of more than 93,000,000 on our site (0.029%) to promote the online censorship of American citizens.”

Gab also questioned whether President Trump, Republican Leader Kevin McCarthy, Senator Josh Hawley, and Trump’s 2020 Presidential Campaign Manager Brad Parscale were aware that this research was being used to “train AI to censor ‘hate speech’ online, which SCOTUS has ruled is protected by 1A.”

“Why is taxpayer money being spent to study first amendment protected speech and the development of artificial intelligence to stop it?” Gab added.
