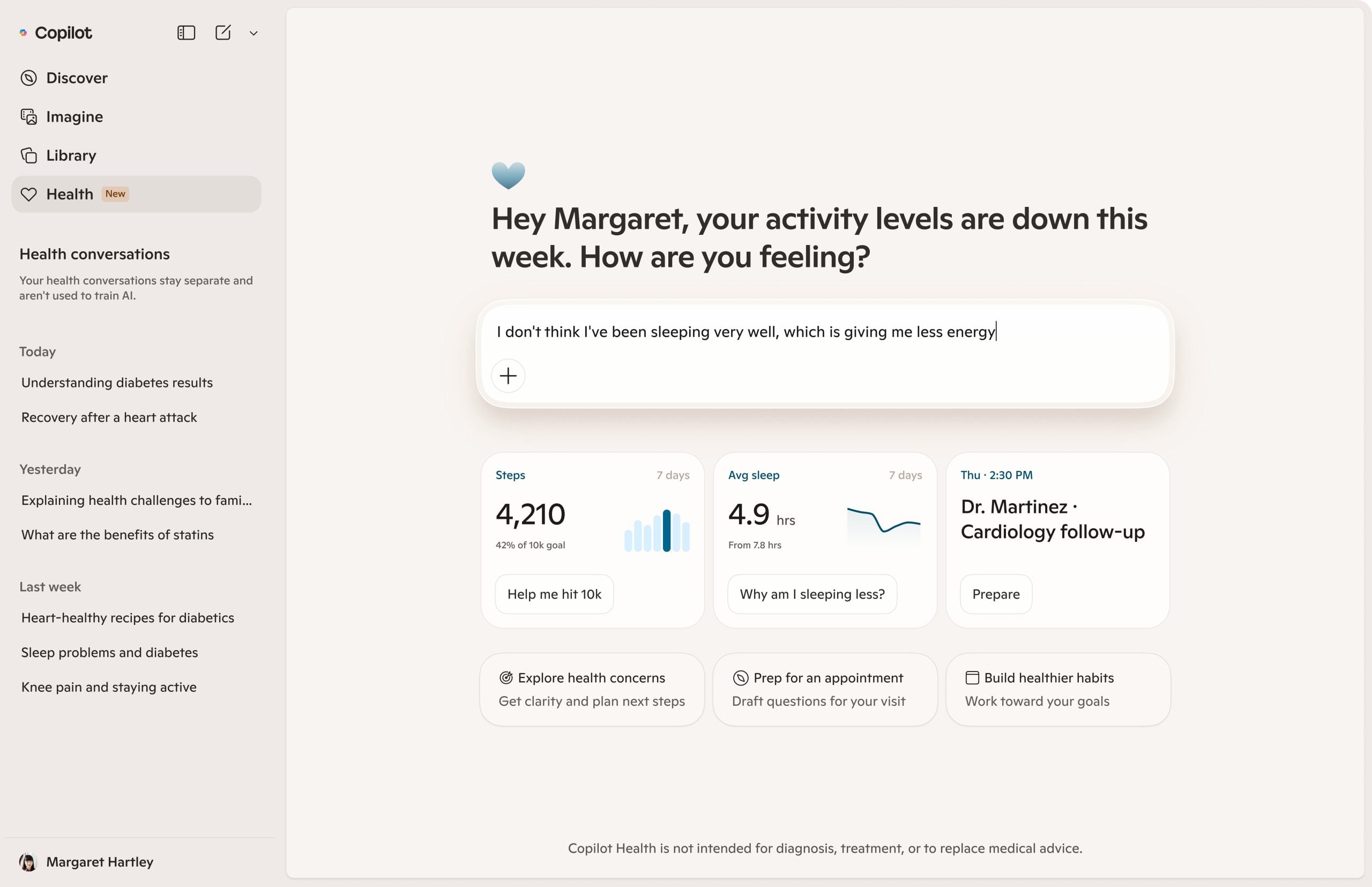

Microsoft wants your medical records. The company launched Copilot Health this week, an AI feature that pulls together personal health history from wearable devices, lab results, and hospital systems, then lets users ask questions about all of it in a single interface.

That’s a significant amount of sensitive data landing in the hands of a company that, notably, isn’t legally required to treat it the way your doctor is.

The feature sits inside Microsoft’s broader Copilot product and connects to medical records from over 50,000 US hospitals and healthcare organizations through a platform called HealthEx.

Lab results come in through Function, a health tech company. Wearables from Apple, Oura, Fitbit, and more than 50 other manufacturers can link directly to the dashboard.

The homepage aggregates step counts, appointment reminders, and other health signals depending on what users opt to share. It also offers access to provider directories, letting users search for doctors by specialty, location, language, and accepted insurance.

Microsoft frames this as helping you understand your health, not replacing your doctor. What it’s actually building is a centralized health surveillance layer that sits above the fragmented ecosystem of hospitals, labs, and wearable companies and aggregates everything into one place.

That may be genuinely useful. It also concentrates a significant amount of sensitive personal data in a product that is not HIPAA compliant.

That last point matters more than Microsoft’s press release suggests. The Health Insurance Portability and Accountability Act exists to set security requirements for electronic health data and restrict how it can be used and disclosed.

Hospitals and doctors who violate HIPAA face fines and potential criminal liability. Microsoft faces neither, because it doesn’t have to be HIPAA compliant to run Copilot Health.

Dr. Dominic King, VP of health at Microsoft AI, addressed this directly ahead of the launch: “HIPAA is not required for a direct-consumer experience like this when you’re using your own data.”

He went on to say: “However, at Copilot, we think it’s incredibly important that we’re meeting all the best standards out there. So, we will be announcing some updates here on our standing in terms of what are called ‘HIPAA controls.’” What those updates actually entail, King didn’t say.

Microsoft does point to an ISO/IEC 42001 certification, an international standard for AI management systems that covers responsible AI use, traceability, and transparency. It’s a real certification, shared with Microsoft 365 Copilot and Microsoft 365 Copilot Chat. It’s also not a substitute for HIPAA controls, and it doesn’t restrict what Microsoft can do with health data the way federal law restricts your physician.

The company says health chats are “isolated from general Copilot and kept under additional access, privacy, and safety controls,” and that data from those chats isn’t used to train its AI models.

Users can delete their health data or disconnect data sources at any time. These are big commitments. They’re also voluntary ones, which means Microsoft can revise them at any point by updating its privacy policy. There’s no regulatory backstop if it does.