The recent mass shootings in New Zealand were a tragedy, but the attempts by social media companies and ISPs (Internet Service Providers) to suppress information about the event have been completely overblown. And now Facebook is suggesting that it's going to implement even more AI-based (artificial intelligence) censorship in response to this horrific event.

In a new blog post, Facebook provided some updates on the changes it’s going to be making following the New Zealand attacks. These changes include:

- Improving its matching technology to stop the spread of viral videos of this nature, including audio-based technology that identifies variants of videos

- Exploring whether AI can be used for these cases

- Continuing to combat hate speech and hate groups of all kinds using proactive detection technology

These changes are a direct response to the pressure the company has been facing following the shootings, which were live-streamed on Facebook.

When you consider that Facebook has over 2 billion monthly active users and that the mass shootings were one of the most talked-about news events at the time, Facebook was actually quite effective at stopping the spread of the violent videos on its platform. In total:

- The original video of the shootings was viewed fewer than 200 times during its live broadcast

- The original video of the shootings was viewed about 4,000 times in total before being removed

- Other videos featuring the shootings were viewed around 300,000 times before being removed

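This means that only a tiny fraction of Facebook users saw a video featuring the attacks on the platform. Even if every one of those roughly 304,000 views (about 4,000 for the original plus about 300,000 for other videos) came from a different person, that works out to about 0.015% of 2 billion users, and the true share is lower still because Facebook's user base is larger than 2 billion.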

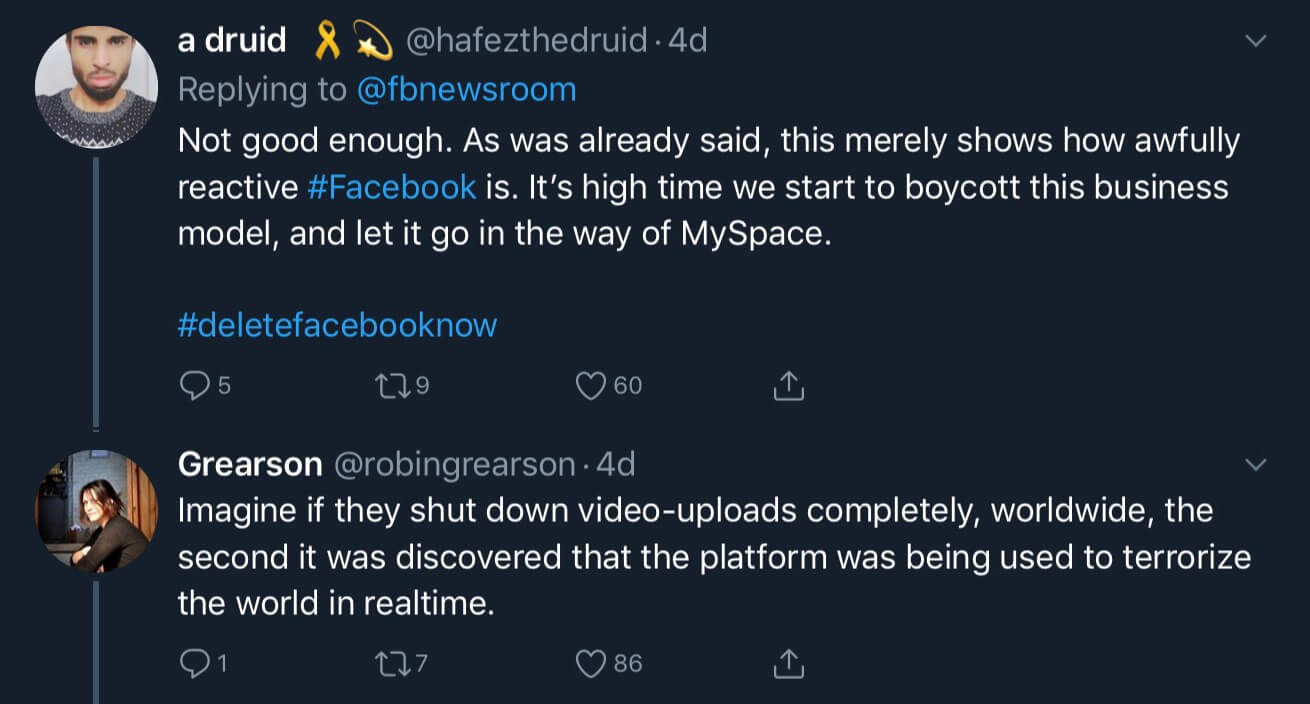

However, a lot of people were not satisfied with Facebook’s efforts and demanded that the company do more, so now we’re seeing these changes from Facebook.

Some may see these changes as a good solution for stopping the spread of violent content on Facebook. However, as technology becomes an increasingly prevalent part of our lives, we need to accept that there will always be a small number of people who do horrible things. These people will always be able to use this technology for their own purposes, and we can't blame social media companies for the actions these people take.

If we continue to blame the social media companies, they’ll be forced to enact more aggressive content moderation policies, and these policies will inevitably lead to lots of people being censored because of a few people’s destructive actions.

We only need to look at the way social media companies, ISPs, and the New Zealand government have reacted to this situation to see how quickly censorship gets out of hand. Websites such as Dissenter, which had no direct connection to the shootings, were blocked by many of New Zealand's ISPs. YouTube also disabled its search filtering features following the mass shootings. Millions of users have already lost access to their favorite sites and features because of one person's actions.

Using AI as a solution to this problem will only make things worse. YouTube's copyright detection systems are notorious for incorrectly taking down content, and any AI that Facebook rolls out will be plagued with similar problems.

Another problem with this AI-based solution is that while it's being introduced to tackle violent and extreme content, once the technology is out there, it can be used to take down other types of content too. We've seen social media sites censor people for relatively innocuous things, including so-called "misgendering" and using the phrase "learn to code." If the logic behind bans like these gets programmed into an AI solution, we could see millions of people being censored for saying things that offend a small group of people.

Overall, using AI and focusing on censoring content is a very bad idea. We need to accept that small amounts of horrible content will always exist on the internet and instead ensure that the rights of people who are using the internet legally are respected.

If you're tired of censorship and dystopian threats against civil liberties, subscribe to Reclaim The Net.