FC Barcelona got fined €500,000 ($579,219) for scanning the faces and recording the voices of over 100,000 members without doing the legal homework first.

Spain’s data protection authority, the AEPD, found the club had deployed biometric identity verification during a membership census update and processed all of it without a valid Data Protection Impact Assessment.

Members renewing their details remotely were required to either submit a facial scan through their device camera or record their voice. Both systems were live, both were processing biometric data at scale, and the documentation Barcelona produced to justify any of it didn’t meet the bar GDPR sets for high-risk processing.

Article 35 of the GDPR requires organizations to conduct a DPIA before deploying any system likely to create a high risk for individuals. Biometric data used for identification qualifies automatically.

Processing that touches more than 100,000 people, including minors, qualifies. So does using new technologies. Barcelona’s system hit all three criteria. The AEPD concluded the club’s documentation was missing the essential components of a genuine assessment: no real necessity and proportionality analysis, and no adequate evaluation of the risks the processing posed to the people whose faces and voices it captured.

The AEPD’s decision in case PS-00450-2024 makes one point with particular clarity: consent doesn’t substitute for a DPIA. Barcelona had asked members to agree to biometric data collection, and members had agreed.

That agreement is legally irrelevant to the separate procedural obligation to assess risk before the system goes live. The GDPR treats them as independent requirements. Satisfying one doesn’t discharge the other.

What a valid DPIA actually requires, according to the decision, is a clear description of the processing, a genuine necessity and proportionality assessment, a detailed risk evaluation, proposed mitigation measures, and a residual risk assessment after mitigations are applied. Organizations that generate DPIA documentation as a compliance checkbox, without substantively working through those questions, remain exposed regardless of what consent language they put in front of users.
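For teams that track this internally, the five components can be sketched as a pre-deployment gate. This is a rough illustration, not anything taken from the AEPD decision: the field names, the `DeploymentRecord` type, and both functions are hypothetical. It only checks that each section exists and is non-empty, which is exactly the checkbox level the decision warns against, so a real review would still have to judge the substance of each section.

```python
from dataclasses import dataclass

# Hypothetical section names mirroring the five components listed above.
# They are illustrative only, not an official AEPD or GDPR schema.
REQUIRED_SECTIONS = (
    "processing_description",
    "necessity_and_proportionality",
    "risk_evaluation",
    "mitigation_measures",
    "residual_risk",
)


@dataclass
class DeploymentRecord:
    dpia_sections: dict      # section name -> written analysis (str)
    consent_obtained: bool   # recorded, but never used by the DPIA gate


def missing_dpia_sections(record):
    """Return the required sections that are absent or blank, in order."""
    return [
        name
        for name in REQUIRED_SECTIONS
        if not record.dpia_sections.get(name, "").strip()
    ]


def dpia_gate_passed(record):
    """True only when every required section has some content.

    Consent is deliberately not consulted: per the decision, it does
    not substitute for the assessment.
    """
    return not missing_dpia_sections(record)


# Example: consent was collected, but most of the assessment is missing.
record = DeploymentRecord(
    dpia_sections={
        "processing_description": "Remote census with face or voice check",
        "risk_evaluation": "   ",  # blank text counts as missing
    },
    consent_obtained=True,
)
print(missing_dpia_sections(record))
print(dpia_gate_passed(record))
```

Keeping `consent_obtained` out of the gate mirrors the point of the decision: the two obligations are tracked separately, and satisfying one never flips the other.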

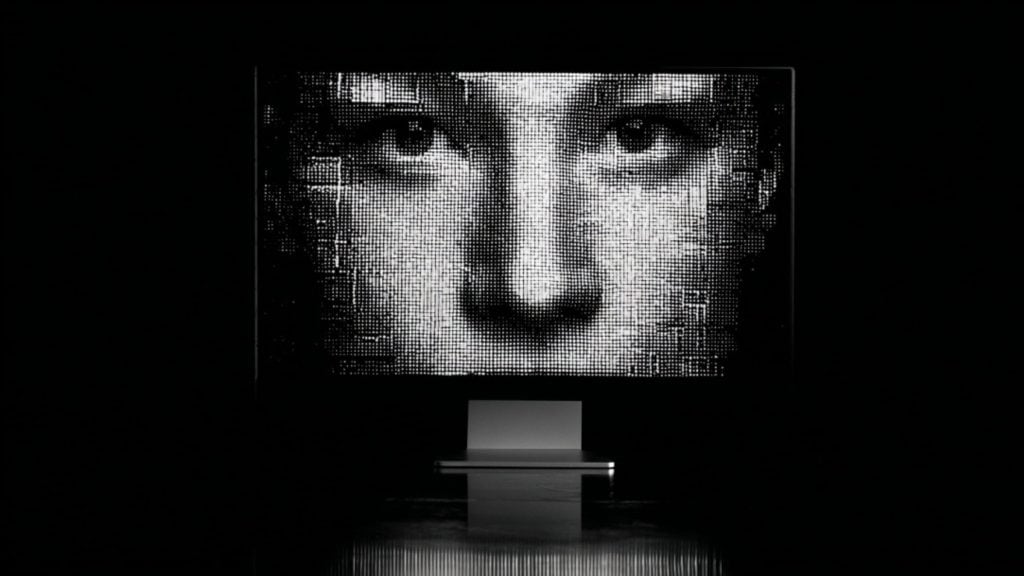

The appetite for facial biometric data has become near-universal across industries, and the Barcelona case lands in a moment when that appetite is accelerating faster than the rules meant to govern it.

Banks deploy facial recognition for customer onboarding. Retailers use it for age verification at the point of sale. Hospitals scan patients at check-in. Stadiums have replaced tickets with face scans.

The framing is always convenience, security, or safety. But organizations across every sector are building permanent biometric records of the people they serve, often without seriously asking whether they need to.

Facial recognition now accounts for nearly 30% of biometric authentication usage among American users, with roughly 131 million daily interactions processed across the country. More than half the US population engages with recognition systems regularly. That infrastructure touches the daily lives of hundreds of millions of people, most of whom have little meaningful understanding of what’s being captured or where it goes.

The fundamental problem is one that organizations consistently downplay: facial biometrics cannot be changed if compromised. Unlike passwords, credit cards, or even Social Security numbers, facial features are permanent identifiers that cannot be reset or replaced. When a company that stores your face geometry gets breached, you don’t get to change your face. The exposure is for life.